5 Powerful Types of Neural Networks: MLP, CNN, RNN, Autoencoder, GAN

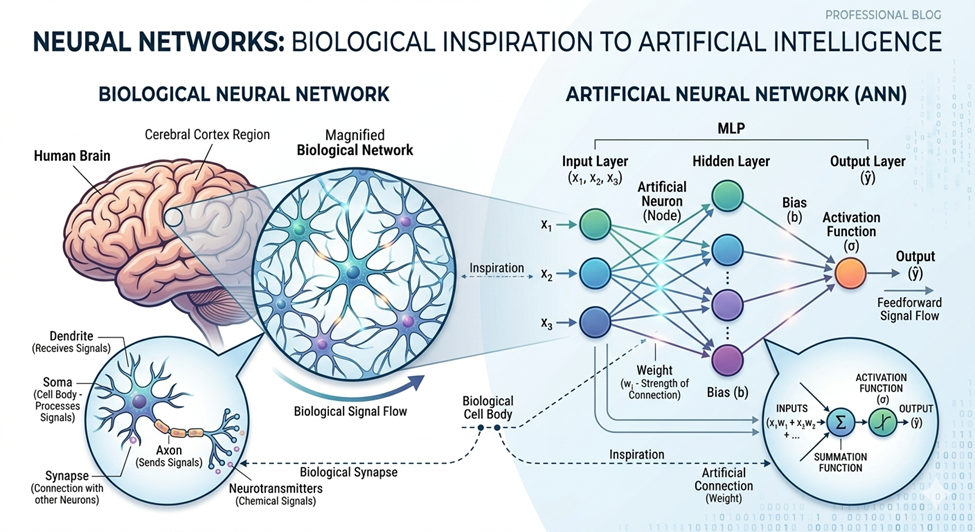

Neural networks are machine learning models inspired by the human brain. They are often described as a digital analogue of the brain because they are built from interconnected nodes (neurons) arranged in multiple layers (an input layer, one or more hidden layers, and an output layer) that process data, identify patterns, and predict outcomes.

Unlike traditional programming, which follows strict if/else rules (e.g., if a condition is true, output 1; else output 0), a neural network learns patterns from raw data and uses them to predict future outcomes, rather than depending entirely on hard-coded conditions.

Types of Neural Networks

Neural networks are only loosely modeled on the human brain, but the analogy holds to some extent. For example, in an autonomous vehicle, a network takes input from sensors (like a human seeing an obstacle), processes the data in hidden layers (like the brain thinking), and produces an output action, such as reducing the car’s speed (like a human reflex).

However, neural networks are not all the same in structure. Different architectures use different layers and techniques to handle specific types of data. The main types are:

- Multi-Layer Perceptron

- Convolutional Neural Network

- Recurrent Neural Network

- Autoencoder

- Generative Adversarial Network

Feed-Forward Neural Network

Feed-forward networks are the simplest form of neural network. They are unidirectional: data enters at the input layer, is processed by the hidden layers, and exits at the output layer, without any loops. They are usually trained with supervised learning using backpropagation and are well suited to classification (including simple image classification), pattern recognition, and regression on structured data.
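As a minimal sketch of that one-way flow, the NumPy code below pushes two example inputs through a tiny network with one hidden layer. The layer sizes, the random weights, and the choice of ReLU and sigmoid activations are illustrative assumptions; a trained network would learn its weights with backpropagation instead.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: 2 inputs -> 3 hidden units -> 1 output
W1 = rng.normal(size=(2, 3))   # input -> hidden weights
b1 = np.zeros(3)               # hidden-layer biases
W2 = rng.normal(size=(3, 1))   # hidden -> output weights
b2 = np.zeros(1)               # output bias

def relu(z):
    return np.maximum(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(x):
    """Data moves strictly input -> hidden -> output; nothing is fed back."""
    hidden = relu(x @ W1 + b1)
    return sigmoid(hidden @ W2 + b2)

X = np.array([[0.5, -1.2], [1.0, 0.3]])  # two example inputs, two features each
print(forward(X))  # one output probability per input row, shape (2, 1)
```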

Multi-Layer Perceptron (MLP)

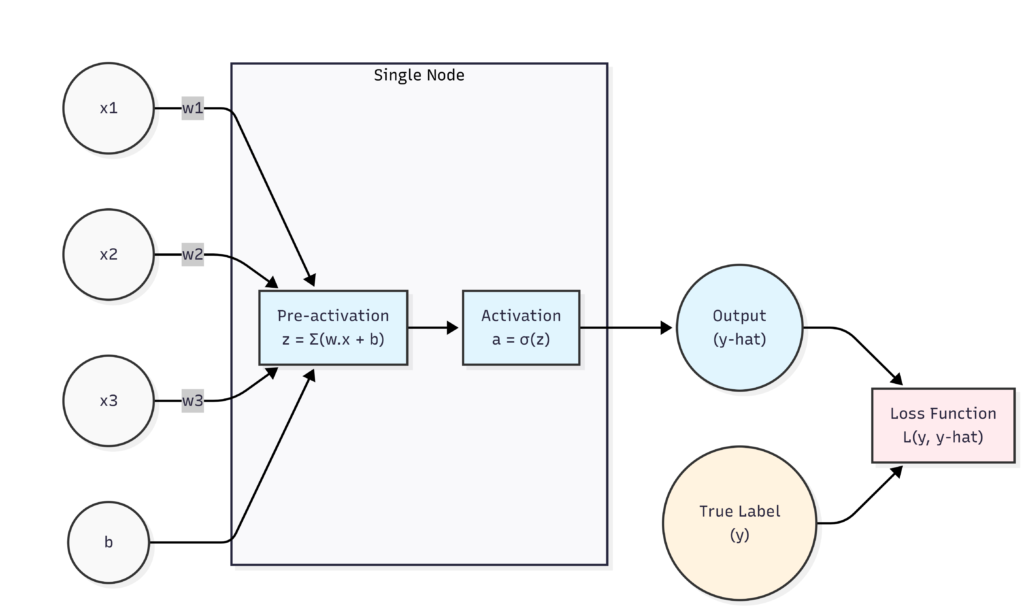

A Multi-Layer Perceptron is a feed-forward neural network built from layers of perceptrons. Its basic unit, a single perceptron, is a binary classifier for linearly separable data: it separates the data with a line (a hyperplane in higher dimensions), producing a 1 region and a 0 region. By stacking many perceptrons into hidden layers with non-linear activation functions and training them with backpropagation, an MLP can also handle non-linear data.
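To illustrate the single-perceptron case, here is a minimal sketch of the classic perceptron learning rule with a step activation, trained on the AND gate (a linearly separable problem). The learning rate, epoch count, and zero initialization are arbitrary choices, and this is one perceptron rather than a full MLP.

```python
import numpy as np

# AND gate: linearly separable, so a single perceptron can learn it
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]])
y = np.array([0, 0, 0, 1])

w = np.zeros(2)   # weights, start at zero
b = 0.0           # bias
lr = 1.0          # learning rate (arbitrary choice)

def predict(x):
    # Step activation: output 1 if the weighted sum plus bias is positive, else 0
    return int(np.dot(w, x) + b > 0)

for epoch in range(20):
    for xi, target in zip(X, y):
        error = target - predict(xi)
        # Perceptron learning rule: move the line only when a point is misclassified
        w += lr * error * xi
        b += lr * error

print([predict(xi) for xi in X])  # [0, 0, 0, 1]
```

Swapping in XOR labels ([0, 1, 1, 0]) would never converge, because no single line separates XOR; that is the gap the hidden layers of an MLP fill.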

Inputs: x1 and x2 are the input nodes. They pass the raw feature values on to the processing nodes in the hidden layers.

Weights: These are multipliers that decide how much each input matters during processing. An input with a large weight has more impact on the output than an input with a small weight. The weight values are learned during training.

Bias: The bias is also a trainable parameter and acts like the intercept in a linear equation. It shifts the decision boundary up or down (so it does not have to pass through the origin), which helps the model fit the data more accurately.

Pre-activation (z): The pre-activation value z is the weighted sum of the inputs plus the bias, i.e., the dot product of the inputs and the weights plus b: z = w1·x1 + w2·x2 + b.

Activation function: The activation function f is applied to z to produce the neuron’s output, f(z). Common choices are the step function, sigmoid, tanh, and ReLU. Non-linear activations are what allow stacked layers to model non-linear patterns.

Loss function: The loss function measures how well the model is performing by calculating the difference between the predicted output and the actual desired output. Training adjusts the weights and biases to minimize this loss.
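Putting these components together for a single neuron, the short calculation below computes z, applies several common activation functions, and scores the sigmoid output with two common losses (squared error and binary cross-entropy). All input, weight, bias, and label values are made up for illustration.

```python
import numpy as np

x = np.array([2.0, 3.0])      # inputs x1, x2 (illustrative values)
w = np.array([0.5, -0.25])    # weights w1, w2 (illustrative values)
b = 0.25                      # bias

# Pre-activation: z = w1*x1 + w2*x2 + b = 1.0 - 0.75 + 0.25
z = np.dot(w, x) + b
print(z)  # 0.5

# A few common activation functions applied to z
step = 1.0 if z > 0 else 0.0
sigmoid = 1.0 / (1.0 + np.exp(-z))
tanh = np.tanh(z)
relu = max(0.0, z)
print(step, round(sigmoid, 4), round(tanh, 4), relu)  # 1.0 0.6225 0.4621 0.5

# Loss: compare the predicted probability (sigmoid output) with the true label
y_true = 1
mse = (y_true - sigmoid) ** 2
bce = -(y_true * np.log(sigmoid) + (1 - y_true) * np.log(1 - sigmoid))
print(round(mse, 4), round(bce, 4))  # 0.1425 0.4741
```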

Applications:

- Pattern Classification: deciding whether a message is spam or not

- Regression: estimating stock prices, or predicting real-estate prices over time

- Image Classification: CNNs are normally preferred for image classification, but MLPs are used for foundational pattern recognition on small, simple images.

Convolutional Neural Network (CNN)

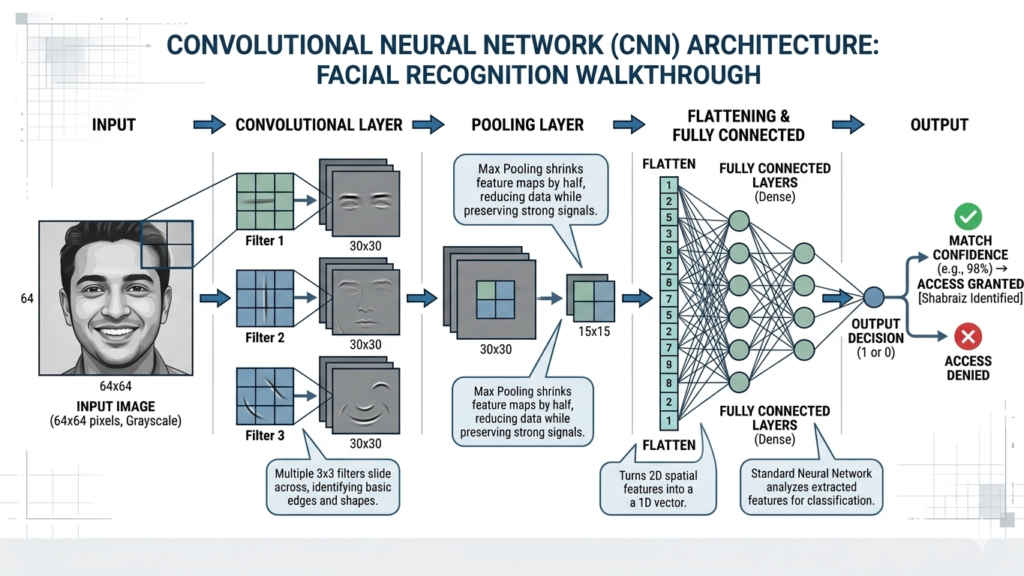

Convolutional Neural Networks are feed-forward networks that use multiple specialized layers to process grid-like data such as images. They are widely used in computer vision and facial recognition. Unlike MLPs, which take a flattened vector as input, CNNs process data spatially: they use filters that scan an image to find edges, shapes, and textures. As data passes through the network, these deep learning models automatically learn hierarchical features, from simple edges in early layers to more complex shapes in later ones.

Convolutional Layer: This is the core building block. It uses trainable filters (kernels, typically 3×3 or 5×5 pixels) that slide over the image to detect patterns, edges, and textures, producing a feature map.
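The sliding-filter operation can be sketched in a few lines of NumPy. This is a minimal, unoptimized version (stride 1, no padding) that, like deep learning libraries, computes cross-correlation without flipping the kernel. The 5×5 image and the hand-picked vertical-edge kernel are illustrative assumptions; in a CNN the kernel values are learned.

```python
import numpy as np

def conv2d(image, kernel):
    """Valid (no padding), stride-1 2-D cross-correlation, as used in CNN layers."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Multiply the kernel element-wise with the patch under it, then sum
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# 5x5 image: dark left part (0), bright right part (1)
image = np.array([[0, 0, 1, 1, 1]] * 5, dtype=float)

# Hand-made vertical-edge kernel (a CNN would learn these values)
kernel = np.array([[-1, 0, 1],
                   [-1, 0, 1],
                   [-1, 0, 1]], dtype=float)

print(conv2d(image, kernel))  # every row is [3, 3, 0]
```

The response is large (3) where the window straddles the dark-to-bright boundary and 0 where the window is uniformly bright, which is the edge-detecting behavior described above.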

Pooling Layer: This layer reduces the computational load by downsampling the feature maps. A small window slides over each feature map and keeps one summary value per region (usually the maximum, known as max pooling), discarding the rest. This shrinks the data while keeping the strongest responses.
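A minimal NumPy sketch of 2×2 max pooling with stride 2 (non-overlapping windows), applied to a made-up 4×4 feature map:

```python
import numpy as np

def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling (stride = window size)."""
    h, w = feature_map.shape
    h, w = h - h % size, w - w % size   # drop rows/cols that don't fill a whole window
    trimmed = feature_map[:h, :w]
    # View as (row blocks, size, col blocks, size) and take the max inside each block
    return trimmed.reshape(h // size, size, w // size, size).max(axis=(1, 3))

fm = np.array([[1, 3, 2, 0],
               [4, 6, 1, 1],
               [0, 2, 9, 5],
               [1, 1, 3, 7]])

print(max_pool2d(fm))  # [[6 2]
                       #  [2 9]]
```

The 4×4 map shrinks to 2×2 (a quarter of the values) while each region’s strongest activation survives.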

Fully Connected Layer: At the end of the network, the feature maps are flattened into a vector, and one or more fully connected (FC) layers use the high-level features extracted by the previous layers to perform the final classification.
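How large is the vector handed to the FC layer? It follows from standard output-size arithmetic. The sketch below assumes stride-1, unpadded 3×3 convolutions and 2×2 pooling, which match Keras’ defaults for the Conv2D(16, 3), Conv2D(32, 3), and MaxPooling2D() layers used in the facial-recognition example later in this article, with a 64×64 input.

```python
def conv_output_size(n, kernel, stride=1, padding=0):
    """Spatial size after a convolution: floor((n + 2*padding - kernel) / stride) + 1."""
    return (n + 2 * padding - kernel) // stride + 1

def pool_output_size(n, pool=2):
    """Spatial size after non-overlapping pooling (any remainder is dropped)."""
    return n // pool

size = 64                                            # 64x64 input image
size = pool_output_size(conv_output_size(size, 3))   # conv 3x3 -> 62, pool -> 31
size = pool_output_size(conv_output_size(size, 3))   # conv 3x3 -> 29, pool -> 14
channels = 32                                        # filters in the last conv layer

# Length of the flattened vector that the first FC layer receives
print(size, size * size * channels)                  # 14 6272
```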

Applications:

- Computer Vision and Object Detection: They power systems that identify, classify, and locate objects within images and videos.

- Medical Imaging and Diagnosis: They analyze X-rays, MRIs, and CT scans to help identify conditions such as cancer and lymph node metastasis.

- Autonomous Vehicles: CNNs are central to the perception systems of self-driving cars, helping identify traffic signals, barriers, and road markings so the car can make decisions.

Recurrent Neural Network (RNN)

Unlike feed-forward networks (such as MLPs and CNNs), which have a strictly unidirectional flow, RNNs feature feedback loops. This architecture lets them maintain an internal “memory”: a hidden state that carries information about past inputs from one time step to the next. Because they process a sequence one step at a time, RNNs have traditionally been a standard choice for speech recognition, text translation, and time-series forecasting.
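The feedback loop boils down to one update rule applied at every time step: the new hidden state depends on the current input and the previous hidden state. Below is a minimal NumPy sketch of a simple (Elman-style) RNN forward pass; the sizes, random weights, and tanh activation are illustrative assumptions, and no training is shown.

```python
import numpy as np

rng = np.random.default_rng(42)
input_size, hidden_size = 3, 4   # hypothetical sizes

W_xh = rng.normal(scale=0.5, size=(input_size, hidden_size))   # input -> hidden
W_hh = rng.normal(scale=0.5, size=(hidden_size, hidden_size))  # hidden -> hidden (the feedback loop)
b_h = np.zeros(hidden_size)

def rnn_forward(sequence):
    """Run a simple RNN over a sequence; return the hidden state at every step."""
    h = np.zeros(hidden_size)   # the "memory" starts empty
    states = []
    for x_t in sequence:        # one time step at a time
        # The new state depends on the current input AND the previous state
        h = np.tanh(x_t @ W_xh + h @ W_hh + b_h)
        states.append(h)
    return np.array(states)

sequence = rng.normal(size=(5, input_size))   # 5 time steps, 3 features each
states = rnn_forward(sequence)
print(states.shape)  # (5, 4): one hidden state per time step
# Many-to-many tasks use every state; many-to-one tasks (e.g. sentiment) use only states[-1]
```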

Types of Architecture:

- One-to-one: a single input maps to a single output (e.g., classifying one image)

- One-to-many: a single input produces a sequence, e.g., image captioning, which turns a single 2D image into a sequence of words.

- Many-to-one: a sequence produces a single output, e.g., sentiment analysis, which reads a complete sentence and outputs one word describing the emotion being expressed, such as happiness, sadness, accomplishment, or joy.

- Many-to-many: a sequence produces a sequence, e.g., machine translation (English to Urdu), which maps many words to many words.

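Variants and applications:

- LSTM (Long Short-Term Memory): Standard RNNs struggle to retain information over long sequences (their “short-term” memory fades because of vanishing gradients); LSTMs are an upgraded variant whose gates let them remember information over much longer spans.

- Widely used in NLP (Natural Language Processing)

- Examples include voice assistants such as Siri, and music generation.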
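To show what the LSTM’s gates do, here is a minimal NumPy sketch of one LSTM time step using the standard gate equations, run over a short random sequence. Packing the four gates into single W and U matrices (and their order within them) is an implementation choice made here for brevity; the sizes and random weights are assumptions, and training is omitted.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM time step. W: (input, 4*hidden), U: (hidden, 4*hidden), b: (4*hidden,)."""
    z = x_t @ W + h_prev @ U + b
    i, f, o, g = np.split(z, 4)        # input gate, forget gate, output gate, candidate
    i, f, o = sigmoid(i), sigmoid(f), sigmoid(o)
    g = np.tanh(g)
    c = f * c_prev + i * g             # cell state: keep part of the old memory, add new info
    h = o * np.tanh(c)                 # hidden state passed to the next step / layer
    return h, c

rng = np.random.default_rng(0)
input_size, hidden_size = 3, 4         # hypothetical sizes
W = rng.normal(scale=0.5, size=(input_size, 4 * hidden_size))
U = rng.normal(scale=0.5, size=(hidden_size, 4 * hidden_size))
b = np.zeros(4 * hidden_size)

h = c = np.zeros(hidden_size)
for x_t in rng.normal(size=(6, input_size)):   # a 6-step sequence
    h, c = lstm_step(x_t, h, c, W, U, b)
print(h.shape, c.shape)  # (4,) (4,)
```

The additive update c = f * c_prev + i * g is the key design choice: when the forget gate f stays close to 1, information can pass through many steps largely unchanged.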

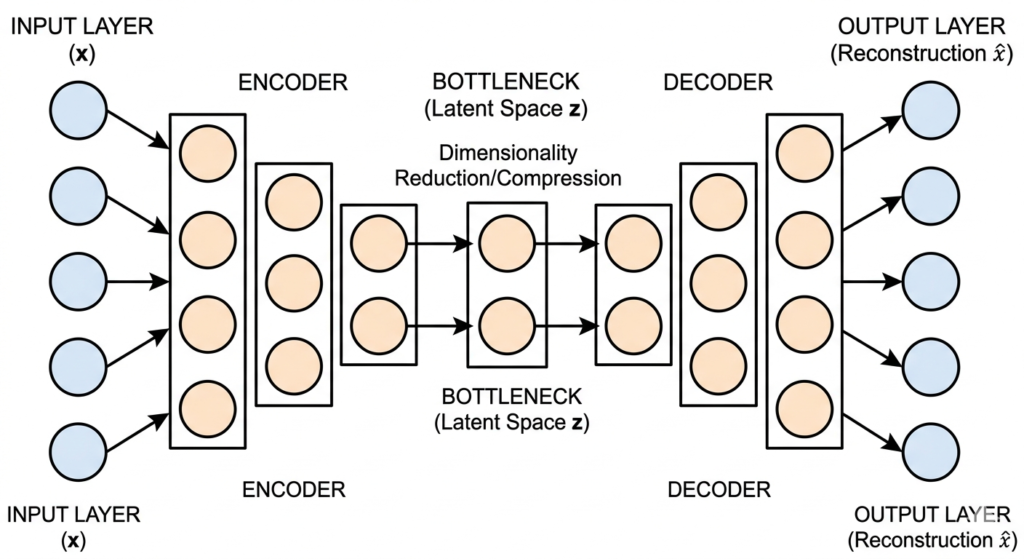

Autoencoder

Autoencoders are neural networks used to compress data while preserving its most important information. They are unsupervised learning algorithms: they force data through a tight bottleneck, learning to ignore noise and retain only the most essential features. Once the data is compressed, the network mirrors the process to reconstruct the original input.

Architecture of an Autoencoder

Encoder: This section compresses the input data into a smaller, more manageable form by reducing its dimensionality.

Bottleneck: This is the smallest layer of the network. It represents the most compressed version of the data, forcing the network to prioritize the most critical features.

Decoder: The decoder attempts to reconstruct the original input from the compressed latent vector. The network uses a loss function (like Mean Squared Error) to measure how closely the output matches the original input, and training minimizes this reconstruction error.
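One quick way to try the encoder/bottleneck/decoder idea is to train scikit-learn’s MLPRegressor to reproduce its own input. This is an improvised autoencoder (scikit-learn has no dedicated autoencoder class), sketched under the assumption that a (32, 8, 32) hidden-layer layout and the built-in digits dataset are reasonable for illustration; the 8-unit middle layer plays the role of the bottleneck.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.neural_network import MLPRegressor

# 8x8 digit images flattened to 64 features, scaled to [0, 1]
X = load_digits().data / 16.0

# Encoder 64 -> 32, bottleneck 8, decoder 32 -> 64 (output size is taken from the target)
autoencoder = MLPRegressor(hidden_layer_sizes=(32, 8, 32), activation='relu',
                           max_iter=500, random_state=0)
autoencoder.fit(X, X)   # the target IS the input: learn to reconstruct it

X_rec = autoencoder.predict(X)
mse = np.mean((X - X_rec) ** 2)   # reconstruction error (mean squared error)
print(f"Reconstruction MSE: {mse:.4f}")
```

Using X as both input and target is what makes this an autoencoder; MLPRegressor minimizes a squared-error loss, matching the MSE reconstruction objective described above.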

Applications:

- Dimensionality Reduction

- Anomaly Detection

- Image denoising

- Generative AI

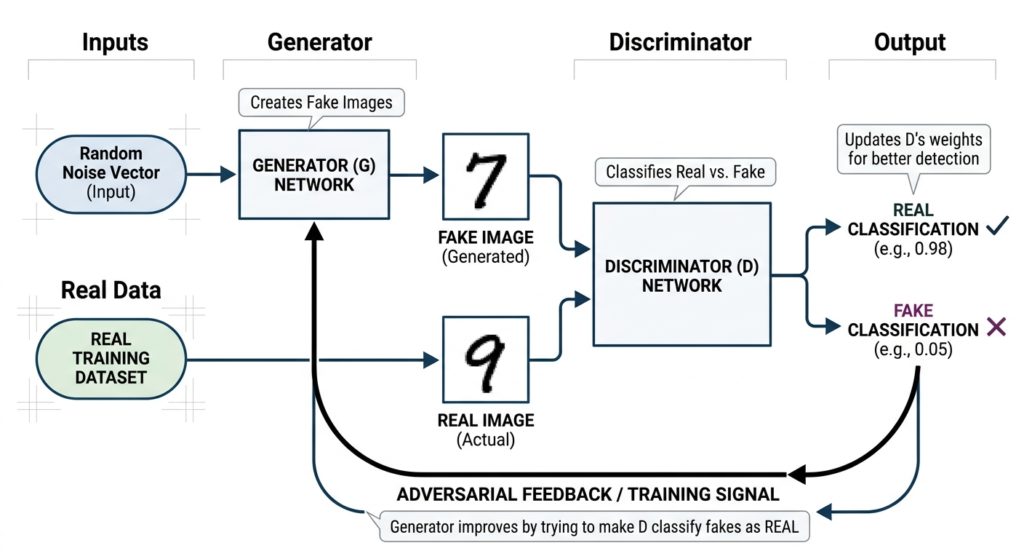

Generative Adversarial Network (GAN)

GANs represent the creative frontier of deep learning. Instead of just analyzing data, GANs can generate entirely new, synthetic data that does not exist in the real world (such as photorealistic human faces). A GAN consists of two competing neural networks locked in a continuous battle.

Generator: This network takes random noise as input and attempts to turn it into fake, synthetic data. It generates a batch of outputs and forwards them to the discriminator.

Real Data: The existing dataset that is fed to the discriminator during training. The discriminator uses it as the reference for judging the generator’s fakes.

Discriminator: This network is trained on both real samples and the generator’s fakes. Its job is to review the Generator’s creations and classify them as “Real” or “Fake.”

The Discriminator’s verdicts are fed back to the Generator (in practice, as gradients of the loss) so it can improve its next attempt. This adversarial loop continues, ideally until the Generator’s output is realistic enough that the Discriminator can no longer reliably tell real from fake. This technology is widely used in deepfakes, AI art, and synthetic voice generation.
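The feedback in this loop is usually expressed through two binary cross-entropy losses. The sketch below computes them for hypothetical discriminator scores (the networks themselves are omitted); the generator loss shown is the common non-saturating variant, which rewards fakes that the discriminator scores as real.

```python
import numpy as np

def bce(p, target):
    """Binary cross-entropy of predicted probabilities p against a 0/1 target."""
    p = np.clip(p, 1e-12, 1 - 1e-12)   # avoid log(0)
    return -np.mean(target * np.log(p) + (1 - target) * np.log(1 - p))

# Hypothetical discriminator outputs: probability that each sample is "Real"
d_real = np.array([0.9, 0.8, 0.95])   # scores on real samples
d_fake = np.array([0.1, 0.3, 0.2])    # scores on the generator's fakes

# Discriminator wants real -> 1 and fake -> 0
d_loss = bce(d_real, 1) + bce(d_fake, 0)

# Generator wants its fakes to be scored as real (non-saturating generator loss)
g_loss = bce(d_fake, 1)

print(f"Discriminator loss: {d_loss:.3f}, Generator loss: {g_loss:.3f}")
# Discriminator loss: 0.355, Generator loss: 1.705
```

Here the discriminator is winning: it scores real samples high and fakes low, so its loss is small while the generator’s loss is large. Training the generator to push d_fake toward 1 is what drives it toward more realistic samples.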

Other applications include generating music and stories.

PRACTICAL EXAMPLES

MULTI-LAYER PERCEPTRON (MLP)

The Problem Statement: You are a data scientist at a major bank trying to automate credit card approvals. You have structured, tabular data for each applicant: Annual Income, Credit Score, Age, and Current Debt. The MLP needs to weigh these inputs, pass them through its hidden layers to find the mathematical patterns of a “safe” borrower, and output a strict decision: 1 (Approved) or 0 (Denied). To keep the decision boundary easy to plot in two dimensions, the mock example below uses only two of these features: income and credit score.

Cell 1: The Imports

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn.neural_network import MLPClassifier
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
```

Cell 2: Generating the Input Data

First, we generate a mock dataset of 500 credit card applicants. We split them into a training set (to teach the AI) and a testing set (to test it later).

```python
# X = Applicant Features (Income, Credit Score), mock values
# y = Target Output (0: Denied, 1: Approved)
X, y = make_classification(n_samples=500, n_features=2, n_informative=2,
                           n_redundant=0, n_clusters_per_class=1, random_state=42)

# Split data: 70% for training, 30% for testing
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

print(f"Data ready! Training samples: {len(X_train)}, Testing samples: {len(X_test)}")
```

Cell 3: Building and Training the Model

Now we initialize the Multi-Layer Perceptron and call .fit(). This is the exact moment the neural network processes the inputs and adjusts its weights to learn the patterns.

```python
# Build an MLP with 2 hidden layers (16 nodes, then 8 nodes)
mlp = MLPClassifier(hidden_layer_sizes=(16, 8), activation='relu', max_iter=1000, random_state=42)

# Train the network
mlp.fit(X_train, y_train)

# Test the network's accuracy on the 30% of data it has never seen
accuracy = mlp.score(X_test, y_test)
print(f"Training Complete! Model Accuracy: {accuracy * 100:.2f}%")
```

Cell 4: Visualizing the Output

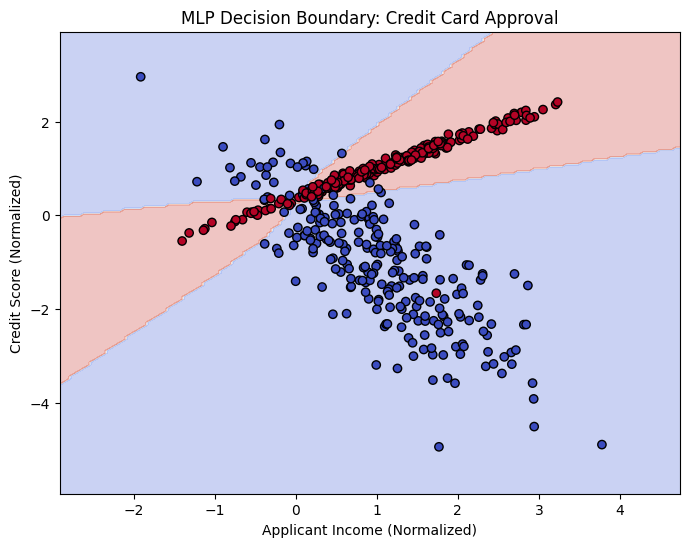

To show what the network learned, we ask it to predict the outcome for every point on a fine grid of Income and Credit Score values. We then plot the decision boundary it created.

```python
# Create a mesh grid to map the "Approved" vs "Denied" territories
x_min, x_max = X[:, 0].min() - 1, X[:, 0].max() + 1
y_min, y_max = X[:, 1].min() - 1, X[:, 1].max() + 1
xx, yy = np.meshgrid(np.arange(x_min, x_max, 0.05),
                     np.arange(y_min, y_max, 0.05))

# The MLP predicts the outcome for the entire grid
Z = mlp.predict(np.c_[xx.ravel(), yy.ravel()])
Z = Z.reshape(xx.shape)

# Plotting the results (coolwarm colormap: blue = 0 Denied, red = 1 Approved)
plt.figure(figsize=(8, 6))
plt.contourf(xx, yy, Z, alpha=0.3, cmap='coolwarm')                    # The AI's decision boundary
plt.scatter(X[:, 0], X[:, 1], c=y, edgecolors='k', cmap='coolwarm')   # The actual applicants
plt.title("MLP Decision Boundary: Credit Card Approval")
plt.xlabel("Applicant Income (Normalized)")
plt.ylabel("Credit Score (Normalized)")
plt.show()
```
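Beyond the plot, the trained model can score an individual applicant. The sketch below retrains the same mock model so it runs on its own; the new applicant’s feature values are hypothetical and expressed in the same normalized units as the plot axes. predict_proba returns one probability per class in the order of mlp.classes_, here [0, 1], i.e. [Denied, Approved].

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier

# Same mock data and model settings as the cells above
X, y = make_classification(n_samples=500, n_features=2, n_informative=2,
                           n_redundant=0, n_clusters_per_class=1, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)
mlp = MLPClassifier(hidden_layer_sizes=(16, 8), activation='relu',
                    max_iter=1000, random_state=42).fit(X_train, y_train)

# A hypothetical new applicant: [income, credit score] in normalized units
new_applicant = np.array([[1.5, 0.5]])
decision = mlp.predict(new_applicant)[0]
confidence = mlp.predict_proba(new_applicant)[0]   # [P(Denied), P(Approved)]

print("Approved" if decision == 1 else "Denied", confidence.round(3))
```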

Conclusion

As seen in the result, the MLP divided the data into two distinct regions based on the input criteria. In this plot’s coolwarm colormap, the red zone (label 1) represents the approved applicants, selected because their combined income and credit score establish a low-risk profile. Conversely, the blue zone (label 0) highlights the rejected applicants, who fall into the high-risk cluster due to poor combinations of income and credit history.

Here is where the neural network did the actual work: rather than relying on hard-coded if/else rules written by a programmer, the MLP passed the raw input data through its hidden layers. By adjusting its weights during training, it learned a mathematical relationship between income and credit score that separates safe borrowers from risky ones.

The key difference between this and traditional methods is the shape of the solution. A linear model, such as logistic regression, can only draw a rigid, straight line. The MLP, however, uses its non-linear (ReLU) activation functions to draw a custom, curved boundary, which can bend around overlapping clusters where a straight line would misclassify points.
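One way to check the straight-line versus curved-boundary argument is to train a logistic regression (a linear model) and the same MLP configuration on scikit-learn’s make_moons, a dataset that is deliberately not linearly separable. This swaps in a different dataset from the credit example, and exact accuracies depend on the noise level and random seed, so none are quoted here.

```python
from sklearn.datasets import make_moons
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier

# Two interleaving half-moons: no straight line separates them cleanly
X, y = make_moons(n_samples=500, noise=0.2, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

linear = LogisticRegression().fit(X_train, y_train)   # straight-line boundary
mlp = MLPClassifier(hidden_layer_sizes=(16, 8), activation='relu',
                    max_iter=1000, random_state=42).fit(X_train, y_train)  # curved boundary

lr_acc = linear.score(X_test, y_test)
mlp_acc = mlp.score(X_test, y_test)
print(f"Logistic regression: {lr_acc:.2%}  MLP: {mlp_acc:.2%}")
```

On curved data like this, the MLP would be expected to score noticeably higher; on nearly linearly separable data the gap can shrink to almost nothing.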

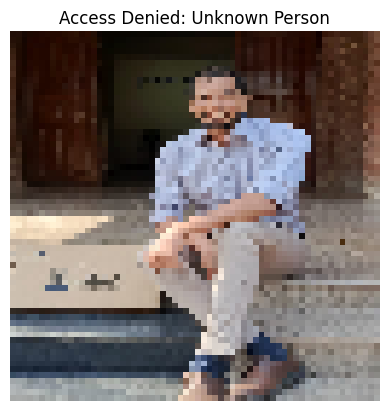

CNN: Custom Facial Recognition

We need to build an automated security checkpoint that uses a camera feed to grant or deny access. Instead of relying on structured spreadsheet data, the network must analyze raw image pixels. The objective is to extract facial features from a photo and determine if the person is an authorized user (“Shabraiz”) or an “Unknown” individual.

The Data Setup: To train this model on custom data, we cannot use pre-packaged datasets. We must structure our raw image files locally so TensorFlow can label them automatically. Before running the code, the project directory is set up exactly like this:

- dataset/shabraiz/ (contains 30+ images of the authorized user)

- dataset/unknown/ (contains 30+ images of random people)

Step 1: Loading and Preparing the Custom Images

First, we load the images, resize them to a uniform 64×64 pixel grid, and split them into training and validation sets.

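```python
import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt

# 1. Load images directly from the custom folders.
# Labels come from the folder names in alphabetical order: 0 = shabraiz, 1 = unknown
# 2. Split into Training (80%) and Validation (20%).
# validation_split + subset with a fixed seed keeps the two subsets disjoint;
# take()/skip() on a shuffled dataset can reshuffle every epoch and leak images across the split.
train_dataset = tf.keras.utils.image_dataset_from_directory(
    "dataset",
    validation_split=0.2,
    subset="training",
    seed=42,
    image_size=(64, 64),
    batch_size=16,
    label_mode='binary'
)
val_dataset = tf.keras.utils.image_dataset_from_directory(
    "dataset",
    validation_split=0.2,
    subset="validation",
    seed=42,
    image_size=(64, 64),
    batch_size=16,
    label_mode='binary'
)
```

Step 2: Building the CNN Architecture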

Next, we define the hidden layers. Because we are processing images, we use Convolutional and Pooling layers to extract structural features before making a final decision.

```python
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Input, Conv2D, MaxPooling2D, Flatten, Dense, Rescaling

model = Sequential([
    # Declare the input: 64x64 RGB images
    Input(shape=(64, 64, 3)),
    # Standardize pixel values to scale between 0 and 1
    Rescaling(1./255),
    # Convolutional & Pooling Layer 1: detects basic edges and geometry
    Conv2D(16, 3, activation='relu'),
    MaxPooling2D(),
    # Convolutional & Pooling Layer 2: combines them into larger patterns (e.g., parts of facial features)
    Conv2D(32, 3, activation='relu'),
    MaxPooling2D(),
    # Flatten the 2D feature maps into a 1D vector
    Flatten(),
    # Fully connected hidden layer, then one sigmoid unit for the final 1 vs 0 decision
    Dense(64, activation='relu'),
    Dense(1, activation='sigmoid')
])

model.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])

# Train the network on the image folders
model.fit(train_dataset, validation_data=val_dataset, epochs=10)
```

Step 3: Testing a Brand New Photo

To prove the network actually learned the facial structures, we pass a completely new, unseen photo into the trained model to generate an Access Granted or Access Denied response.

```python
import tkinter as tk
from tkinter import filedialog
import numpy as np
import matplotlib.pyplot as plt
import tensorflow as tf

# 1. Open a standard pop-up window to let you visually select the file
root = tk.Tk()
root.withdraw()                      # Hides the empty root Tk window
root.attributes('-topmost', True)    # Forces the dialog in front of other windows (e.g., VS Code)
print("Please select an image from the pop-up window...")
test_image_path = filedialog.askopenfilename(title="Select a photo to test")
root.destroy()                       # Close the hidden Tk root once the dialog returns

# 2. If a file was selected, run the CNN on it
if test_image_path:
    print(f"Loading: {test_image_path}")

    # Format it to the required 64x64 size (the model's Rescaling layer handles /255)
    img = tf.keras.utils.load_img(test_image_path, target_size=(64, 64))
    img_array = tf.keras.utils.img_to_array(img)
    img_array = np.expand_dims(img_array, axis=0)   # add a batch dimension: (1, 64, 64, 3)

    # Execute the prediction: the sigmoid output is P(label 1) = P("unknown")
    prediction = model.predict(img_array)

    # Output the strict binary decision (label 0 = shabraiz)
    if prediction[0][0] < 0.5:
        result = "Access Granted: Shabraiz Identified"
    else:
        result = "Access Denied: Unknown Person"

    # Render the visual result
    plt.imshow(img)
    plt.title(result)
    plt.axis('off')
    plt.show()
else:
    print("You canceled the file selection.")
```

Conclusion

The key difference between this architecture and the previously discussed MLP is how it handles grid-like data. An MLP flattens the image into a 1D vector immediately, which discards the spatial relationships between pixels (e.g., the structural distance between two eyes). By maintaining the 2D grid structure through its convolutional and pooling layers, and reusing the same small filters at every position, the CNN preserves spatial awareness, which makes it the standard architecture for modern image classification and facial recognition systems.
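A back-of-the-envelope parameter count shows another reason CNNs suit images: weight sharing. The comparison below assumes a hypothetical MLP whose first layer is a 64-unit dense layer applied directly to the flattened 64×64×3 image, versus the first Conv2D(16, 3) layer of the model above, whose small filters are reused at every position.

```python
# Parameters needed to connect a 64x64 RGB image to the first layer

# Hypothetical MLP: flatten the image, then a fully connected layer with 64 units
inputs = 64 * 64 * 3                # 12,288 pixel values
dense_params = inputs * 64 + 64     # one weight per (input, unit) pair + one bias per unit
print(dense_params)                 # 786496

# CNN: 16 filters of size 3x3 over 3 color channels, shared across every image position
conv_params = 3 * 3 * 3 * 16 + 16   # kernel weights + one bias per filter
print(conv_params)                  # 448
```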